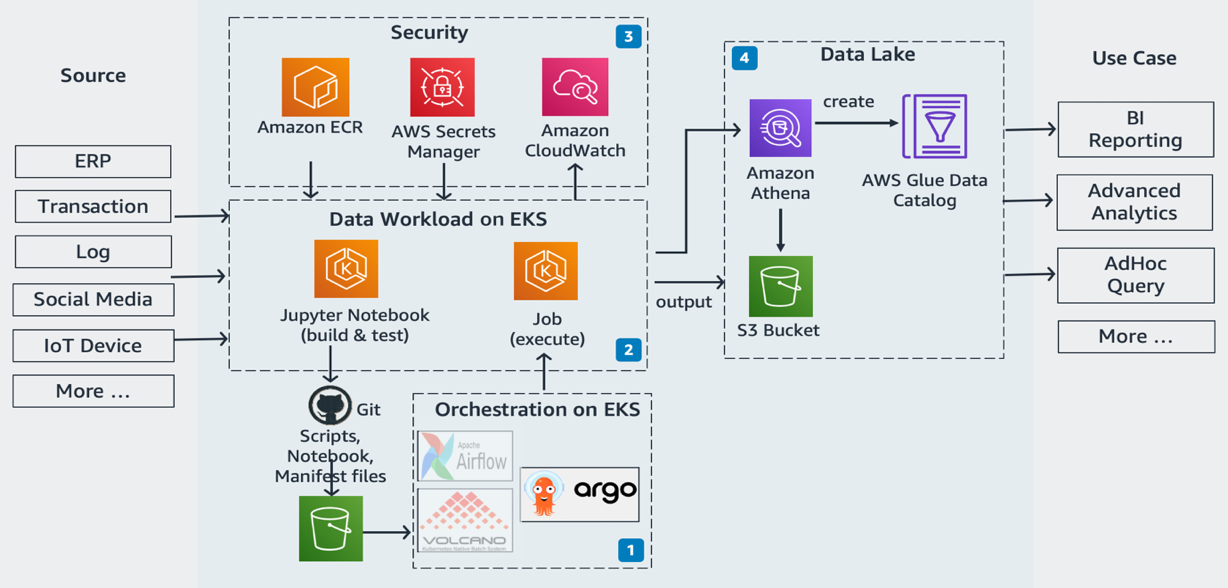

We accomplish this as follows: 1) applying separation of concerns (SoC) design principle to data lake infrastructure and ETL jobs via dedicated source code repositories, 2) a centralized deployment model utilizing CDK pipelines, and 3) AWS CDK enabled ETL pipelines from the start. Our goal is to implement a CI/CD solution that automates the provisioning of data lake infrastructure resources and deploys ETL jobs interactively. This simplifies usage and streamlines implementation.īefore exploring the implementation, let’s gain further scope of how we utilize our data lake. The AWS CDK offers high-level constructs for use with all of our data lake resources. Applying the IaC principle for data lakes brings the benefit of consistent and repeatable runs across multiple environments, self-documenting infrastructure, and greater flexibility with resource management. Finally, we engage various AWS services for logging, monitoring, security, authentication, authorization, alerting, and notification.Ī common data lake practice is to have multiple environments such as dev, test, and production. Amazon Athena is used for interactive queries and analysis. AWS Lambda and AWS Step Functions schedule and orchestrate AWS Glue extract, transform, and load (ETL) jobs. AWS Glue Data Catalog persists metadata in a central repository. We utilize the following tools: AWS Glue processes and analyzes the data.

The data lake has a producer where we ingest data into the raw bucket at periodic intervals. purpose-built – Stores the data that is ready for consumption by applications or data lake consumers.conformed – Stores the data that meets the data lake quality requirements.raw – Stores the input data in its original format.

We use three Amazon Simple Storage Service (Amazon S3) buckets: The following figure represents our data lake.
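One common way to keep dev, test, and production consistent is to derive every environment-specific value from a single configuration. The following minimal Python sketch, which needs no AWS libraries, derives one bucket name per data lake zone for each environment. The account IDs, the region, and the `datalake-<zone>-<env>-<account>-<region>` naming convention are hypothetical placeholders, not the values used in this solution; in an AWS CDK app, values like these would typically be passed to each stack as props.

```python
import re
from dataclasses import dataclass

# The three storage zones described above, one S3 bucket each.
ZONES = ("raw", "conformed", "purpose-built")

# Basic S3 bucket naming rules (not exhaustive): 3-63 characters, lowercase
# letters, digits, dots, and hyphens, starting and ending with a letter or digit.
BUCKET_NAME_RE = re.compile(r"^[a-z0-9][a-z0-9.-]{1,61}[a-z0-9]$")


@dataclass(frozen=True)
class DeploymentEnvironment:
    name: str      # "dev", "test", or "prod"
    account: str   # hypothetical placeholder account ID
    region: str

    def bucket_name(self, zone: str) -> str:
        """Return the (hypothetical) bucket name for one zone in this environment."""
        if zone not in ZONES:
            raise ValueError(f"unknown zone: {zone!r}")
        # Bucket names are globally unique, so account and region are included.
        name = f"datalake-{zone}-{self.name}-{self.account}-{self.region}"
        if not BUCKET_NAME_RE.match(name):
            raise ValueError(f"invalid S3 bucket name: {name!r}")
        return name


# Placeholder accounts; a real deployment would use its own dev/test/prod accounts.
ENVIRONMENTS = {
    "dev": DeploymentEnvironment("dev", "111111111111", "us-east-1"),
    "test": DeploymentEnvironment("test", "222222222222", "us-east-1"),
    "prod": DeploymentEnvironment("prod", "333333333333", "us-east-1"),
}


def bucket_names(env_name: str) -> dict:
    """Map each zone to its bucket name for the given environment."""
    env = ENVIRONMENTS[env_name]
    return {zone: env.bucket_name(zone) for zone in ZONES}


if __name__ == "__main__":
    for env_name in ENVIRONMENTS:
        print(env_name, bucket_names(env_name))
```

Because S3 bucket names must be unique across all accounts, embedding the account ID and region lets the same code deploy to every environment without naming collisions.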

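To illustrate how Step Functions can orchestrate the AWS Glue ETL jobs, the following sketch builds an Amazon States Language (ASL) definition that runs one Glue job per zone transition: raw to conformed, then conformed to purpose-built. The job names, the retry settings, and the two-step layout are assumptions for illustration, not the pipeline from this post. The `arn:aws:states:::glue:startJobRun.sync` resource is the Step Functions service integration that starts a Glue job run and waits for it to finish.

```python
from __future__ import annotations

import json

# Hypothetical Glue job names, one per zone transition (raw -> conformed -> purpose-built).
GLUE_JOBS = ["raw_to_conformed", "conformed_to_purpose_built"]


def glue_task(job_name: str, next_state: str | None) -> dict:
    """Build one ASL Task state that runs a Glue job and waits for it to finish."""
    state = {
        "Type": "Task",
        # The .sync suffix makes Step Functions wait for the job run to complete.
        "Resource": "arn:aws:states:::glue:startJobRun.sync",
        "Parameters": {"JobName": job_name},
        # Illustrative retry policy: up to two retries with exponential backoff.
        "Retry": [
            {
                "ErrorEquals": ["States.ALL"],
                "IntervalSeconds": 60,
                "MaxAttempts": 2,
                "BackoffRate": 2.0,
            }
        ],
    }
    if next_state is None:
        state["End"] = True
    else:
        state["Next"] = next_state
    return state


def build_definition(job_names: list[str]) -> dict:
    """Chain the Glue jobs sequentially in the order given."""
    if not job_names:
        raise ValueError("at least one Glue job is required")
    states = {}
    for i, job in enumerate(job_names):
        next_state = job_names[i + 1] if i + 1 < len(job_names) else None
        states[job] = glue_task(job, next_state)
    return {
        "Comment": "Run data lake ETL jobs in sequence (illustrative sketch)",
        "StartAt": job_names[0],
        "States": states,
    }


if __name__ == "__main__":
    print(json.dumps(build_definition(GLUE_JOBS), indent=2))
```

The printed JSON could serve as a state machine definition that a scheduled AWS Lambda function starts at periodic intervals. Within a CDK app, the `GlueStartJobRun` construct in the `aws-stepfunctions-tasks` module can express the same task without hand-written JSON.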